After years of delays, broken promises, and falling behind rivals like ChatGPT and Google Assistant, Apple is finally making its boldest move yet. Apple reinvents Siri from the ground up — and this time, the overhaul is real, radical, and powered by Google’s most advanced AI.

Why Apple Reinvents Siri Now — and Why It Took So Long

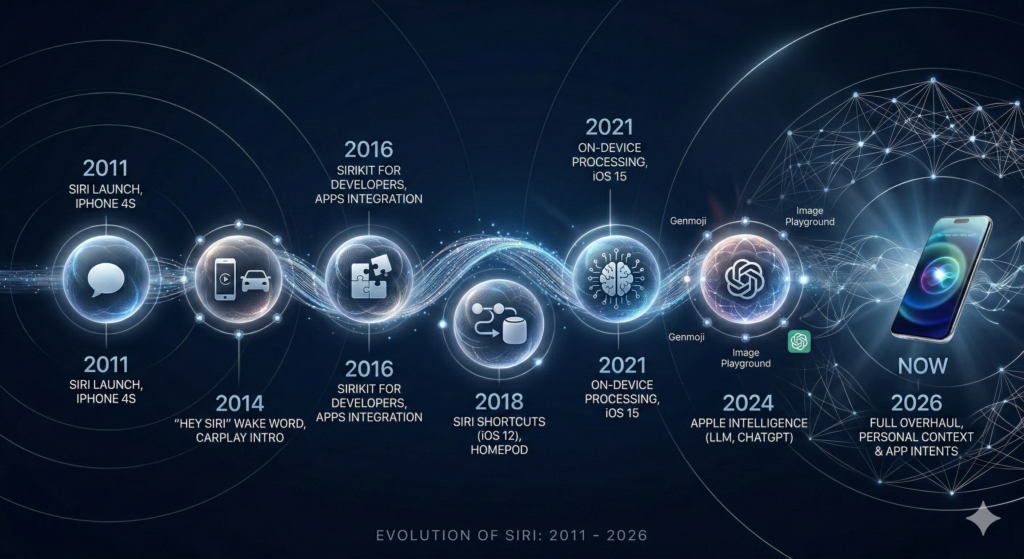

When Apple introduced Siri back in 2011, it was a genuine revolution. No smartphone had ever offered a voice assistant quite like it. But over the following decade, while Google Assistant, Amazon Alexa, and eventually ChatGPT raced ahead with increasingly powerful AI capabilities, Siri stayed frustratingly stuck. Fumbled simple questions, missed commands, and a complete inability to understand context made Siri the butt of jokes rather than a genuinely useful tool.

That story is now changing. Apple reinvents Siri in 2026 with the most ambitious redesign the assistant has ever seen — a full rebuild powered by next-generation AI, deep system integration, and a landmark partnership with Google that gives Siri a brain worthy of an iPhone.

The journey here has not been smooth. Apple first unveiled a reimagined Siri at WWDC 2024 as part of its broader Apple Intelligence initiative. The company promised features that would let Siri understand personal context, perform actions inside apps, and interact with your entire device in a much smarter way. But internal testing revealed unacceptable error rates and inconsistent performance. Apple’s software chief Craig Federighi admitted that the new Siri “did not reach the level of reliability Apple wanted,” and the entire rollout was delayed — first from 2025, then repeatedly through early 2026.

Apple even pulled its own television advertisements promoting the unreleased Siri features, a move one Apple executive reportedly called “especially ugly.” The company’s stock dropped five percent on a single day in February 2026 when Bloomberg’s Mark Gurman reported further delays. But Apple CEO Tim Cook remained firm: the new Siri is coming in 2026, and this time, Apple is getting it right.

“We’re also excited for a more personalised Siri. We’re making good progress on it.”

— Tim Cook, CEO, Apple

What’s Actually New? The Key Features of the Reimagined Siri

The new Siri is not an incremental update. When Apple reinvents Siri, it rebuilds the assistant with a completely different philosophy — one where Siri actually understands you, your apps, and your context rather than just responding to individual commands in isolation.

Here are the headline features confirmed for the new Siri:

Personal Context Understanding

The new Siri can read and understand information stored across your device — emails, messages, photos, calendar entries, files, and notes — and use that context to give smarter, more relevant answers. Ask Siri for your driving licence number and it can retrieve it from a saved photo. Ask it to find a podcast episode a friend recommended and it will search your message threads to find it. This is the kind of genuinely useful intelligence that makes Siri feel less like a search engine and more like a personal assistant who actually knows you.

App Actions Without Opening Apps

One of the most exciting features confirmed for the new Siri is the ability to perform actions inside your apps without you ever having to open them. Apple demonstrated use cases where users could instruct Siri to find a photo, edit it, and save it to a specific folder — entirely by voice, without touching the Photos app. This seamless app control is exactly the kind of deep system integration that sets Apple apart from third-party AI assistants running on top of other platforms.

World Knowledge Answers

Apple has been developing an internal system codenamed “World Knowledge Answers” that transforms Siri into a genuine AI answer engine. Instead of returning a list of search results, the new Siri delivers rich, intelligent summaries combining text, images, and relevant information in a single response. This puts Siri on direct competitive footing with ChatGPT, Perplexity, and Google’s AI Overviews.

Chatbot-Style Conversations

The new Siri will support back-and-forth, conversational interactions in a ChatGPT-style format. You will be able to ask follow-up questions, refine requests, and have Siri maintain context across a conversation, rather than having to repeat yourself with every new query as the old Siri required.

The Google Gemini Partnership: Apple’s Secret Weapon

Perhaps the most surprising development in the story of how Apple reinvents Siri is the identity of its AI partner. In early 2026, Apple and Google jointly announced a landmark multi-year agreement: Google’s Gemini AI models will power the next generation of Apple’s Foundation Models — the intelligence layer at the heart of the new Siri.

The deal is reported to be worth approximately $1 billion per year to Google, making it one of the largest AI licensing agreements in history. For context, Google already pays Apple billions of dollars annually to remain the default search engine in Safari — and now it has secured an additional, arguably more significant, role at the center of Apple’s AI strategy.

Apple’s joint statement with Google explained the reasoning plainly:

“After careful evaluation, Apple determined that Google’s AI technology provides the most capable foundation for Apple Foundation Models and is excited about the innovative new experiences it will unlock for Apple users.”

This is a remarkable admission from a company that has historically prided itself on controlling every layer of its technology stack. Choosing to build on Google’s Gemini rather than developing its own foundation model from scratch reflects both the extraordinary difficulty of training frontier AI and Apple’s determination to deliver quality over speed.

The partnership does not replace ChatGPT’s existing role within Apple Intelligence — OpenAI’s model continues to handle certain text and image generation tasks. Apple has also reportedly held discussions with Anthropic and Perplexity about potential integrations. The emerging picture is of an Apple AI ecosystem that draws on multiple best-in-class models for different tasks, while Gemini serves as the core intelligence layer for Siri itself.

On-Screen Awareness: Siri Finally Understands Your Entire iPhone

One of the most transformative capabilities in the new Siri is what Apple calls “on-screen awareness” — the ability for Siri to see and understand everything currently displayed on your screen, and take action based on that context.

In practical terms, this means Siri can look at a webpage you are reading, a message you have received, or a map you are viewing, and respond intelligently to questions about what is on screen — without you having to describe or paste the content. You could ask Siri to summarize a long article you are reading, translate a foreign language text appearing in an image, or add an address visible in a message directly to your contacts or calendar.

This capability transforms Siri from a voice-activated shortcut into a genuine intelligent layer across the entire iOS experience — contextually aware, genuinely helpful, and capable of acting on information regardless of which app it appears in.

Cross-App Integration: Do More Without Lifting a Finger

The old Siri was essentially app-blind. It could open apps and perform a handful of predefined actions within each, but it had no understanding of how apps related to each other or how to move information between them intelligently.

The new Siri changes this entirely. With seamless cross-app integration, the reimagined assistant can:

- Read a flight confirmation in your email and add it to your calendar automatically

- Understand an appointment location in your calendar and cross-reference Maps and real-time traffic to suggest what time you should leave

- Retrieve a document from Files and share it in a message thread — all in one voice command

- Perform complex, multi-step tasks that previously required opening several apps manually

This level of integration is only possible because Apple controls both the hardware and the operating system — a structural advantage that no third-party AI assistant can replicate on iPhone.
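To make the leave-time suggestion from the list above concrete, the arithmetic behind it can be sketched in a few lines of Python. This is an illustrative sketch only, not Apple's implementation: the function name `suggest_departure`, the ten-minute buffer, and the sample appointment and travel time are all assumptions made up for the example. In practice the travel time would come from a maps service with live traffic.

```python
from datetime import datetime, timedelta


def suggest_departure(appointment_start, travel_minutes, buffer_minutes=10):
    """Return the latest time to leave and still arrive with a small buffer.

    Hypothetical helper: travel_minutes stands in for a live traffic estimate.
    """
    return appointment_start - timedelta(minutes=travel_minutes + buffer_minutes)


# Made-up calendar entry: an appointment at 3:00 pm with a 35-minute drive.
appointment = datetime(2026, 9, 14, 15, 0)
leave_at = suggest_departure(appointment, travel_minutes=35)
print(leave_at.strftime("%H:%M"))  # prints 14:15
```

The calculation itself is trivial. What the article describes as new is that the assistant gathers the inputs (calendar entry, location, traffic) across apps without being told where to look.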

The Release Timeline: When Will You Get the New Siri?

The rollout of the new Siri is happening in phases throughout 2026, rather than as a single launch event. Here is what the timeline currently looks like:

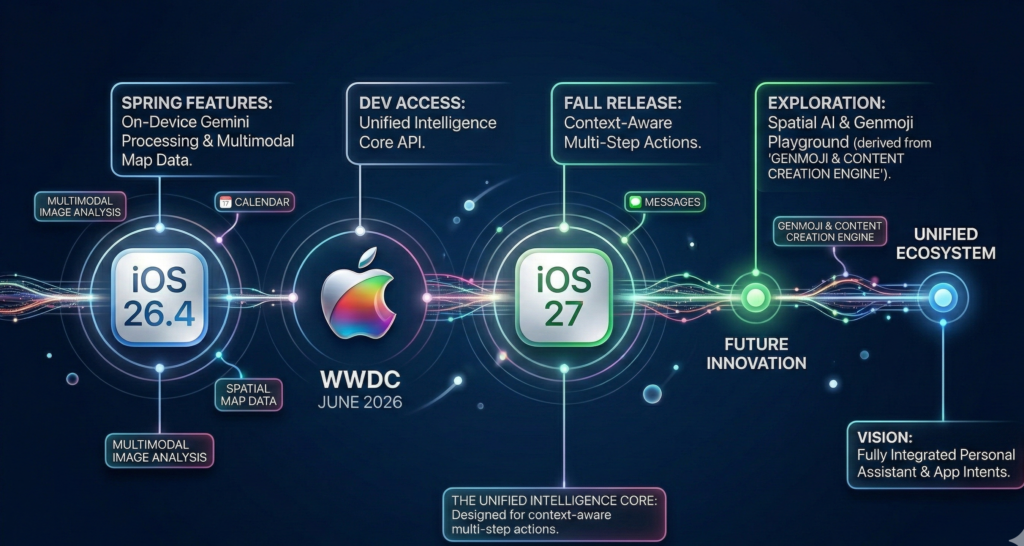

iOS 26.4 (Spring 2026): The first wave of new Siri features, including improved personal context understanding and World Knowledge Answers, was originally targeted for this update. However, Bloomberg’s Mark Gurman reported in February 2026 that some features may be pushed to iOS 26.5 due to testing issues. Apple has confirmed the update is still on track for 2026 without committing to a specific date.

WWDC 2026 (June): Apple is expected to showcase the full scope of the new Siri — including Gemini-powered features and the chatbot-style interface — at its annual Worldwide Developers Conference in June 2026.

iOS 27 (September 2026): The most substantial Siri capabilities, including deep cross-app integration and the full ChatGPT-style conversational experience, are expected to arrive with iOS 27 and the launch of the iPhone 18 series in autumn 2026.

Apple has until the end of December 2026 to deliver on the timeline it publicly committed to in summer 2025 — and the company has consistently said it remains on track.

How the New Siri Compares to ChatGPT and Google Assistant

| Feature | New Siri | ChatGPT | Google Assistant |
|---|---|---|---|
| On-device privacy processing | ✅ Yes | ❌ Cloud only | ⚠️ Partial |
| Deep iOS system integration | ✅ Full | ❌ Limited | ❌ Limited |
| Cross-app actions | ✅ Yes | ❌ No | ⚠️ Limited |
| Personal context (emails, photos, files) | ✅ Yes | ❌ No | ⚠️ Google Workspace only |
| Conversational back-and-forth | ✅ Yes (new) | ✅ Yes | ✅ Yes |
| Powered by frontier AI models | ✅ Gemini + Apple | ✅ GPT-4o | ✅ Gemini |
| Available across Apple devices | ✅ iPhone, iPad, Mac, Watch | ❌ App only | ❌ App only |

The new Siri’s biggest competitive advantage is not raw AI capability, since Google and OpenAI both have excellent models. It is depth of integration. No other AI assistant can do what Siri will be able to do on iPhone, because none lives inside the operating system at the same level.

What This Means for iPhone Users

If you are one of the more than one billion active iPhone users worldwide, here is what the reinvented Siri means for your daily life:

You will use Siri more. The single biggest reason people stopped using Siri was that it was unreliable. When you could not trust it to understand a simple request, you stopped asking. The new Siri — with its dramatically improved language understanding and contextual awareness — is designed to be genuinely trustworthy for everyday tasks.

Your privacy is still protected. Apple has committed to on-device processing for personal data, meaning your emails, messages, and photos are analyzed on your iPhone rather than sent to external servers. Gemini-powered features that require cloud processing will be handled under Apple’s strict data privacy agreements with Google.

Your apps will feel smarter. Even apps that do not directly integrate with Siri will benefit — because Siri can now read on-screen content from any app and take action based on what it sees. The intelligence comes to your content, rather than requiring your content to come to a specific app.

The iPhone ecosystem gets stickier. For iPhone owners considering a switch to Android, the new Siri, deeply woven into Apple hardware and software in ways no Android phone can replicate, will become an increasingly compelling reason to stay within the Apple ecosystem.

Key Takeaways: Apple Reinvents Siri in 2026

When Apple reinvents Siri, it is not just updating a voice assistant — it is fundamentally changing what a smartphone assistant can be. Here is a quick summary of everything covered in this article:

- Apple reinvents Siri with a complete rebuild powered by Google’s Gemini AI models, in a deal worth approximately $1 billion per year

- The new Siri features personal context understanding, reading emails, messages, photos, and files to give smarter answers

- On-screen awareness lets Siri see and act on anything displayed on your screen across all apps

- Cross-app integration allows Siri to perform complex, multi-step tasks spanning multiple apps by voice command

- World Knowledge Answers turns Siri into an AI answer engine delivering rich summaries instead of search results

- The rollout happens in phases: iOS 26.4 (Spring), WWDC showcase (June), and iOS 27 (September 2026)

- Apple maintains its privacy-first approach with on-device processing for personal data

- The new Siri’s strongest advantage is depth of iOS integration — something no competing AI assistant can match

After years of frustration, the reinvented Siri is Apple’s most important software initiative in a decade. Whether it fully delivers on its ambitious promise will become clear over the coming months — but the foundation being laid in 2026 could define the iPhone experience for years to come.